In this short article, you will learn how to test event handlers, such as button clicks, with React Testing Library.

First, we will create a simple component to test:

import React from "react";

export default function ButtonWrapper({ title, ...props }) {
  return <button {...props}>{title}</button>;
}

This ButtonWrapper component takes a title prop plus any other props and returns a standard button element, forwarding the extra props (such as onClick) to it.
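To see why extra props such as onClick end up on the button, it can help to look at the same destructuring pattern outside React. The sketch below is plain JavaScript; `splitProps` is a hypothetical helper invented for this illustration and is not part of React or the component above.

```javascript
// Plain-JavaScript stand-in for the component's parameter list (no React needed).
// `splitProps` is a hypothetical helper used only for this illustration.
function splitProps({ title, ...props }) {
  // `title` is pulled out by name; every other property is collected into `props`.
  return { title, props };
}

const handleClick = () => {};
const result = splitProps({ title: "Add", onClick: handleClick, disabled: false });

console.log(result.title); // "Add"
console.log(Object.keys(result.props)); // [ 'onClick', 'disabled' ]
```

In ButtonWrapper, the collected `props` object is then spread onto the button with `{...props}`, which is how an onClick handler passed to the wrapper reaches the underlying element.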

Now, create a test file with the same name as the component file and a .test.js extension (or .test.tsx if you are using TypeScript), e.g. ButtonWrapper.test.js.

First, import render, screen, and fireEvent from React Testing Library, along with the component:

import React from "react";

import { render, screen, fireEvent } from "@testing-library/react";

import ButtonWrapper from "./ButtonWrapper";

Now, create the test and give it a name (e.g. "handles onClick"):

test("handles onClick", () => {

});

Inside the test, render the ButtonWrapper component:

render(<ButtonWrapper title={"Add"} />);

Next, create a mock function with jest.fn() and pass it as the onClick prop, so that it is called whenever the button is clicked:

const onClick = jest.fn();

render(<ButtonWrapper onClick={onClick} title={"Add"} />);

jest.fn() creates a mock function that records every call made to it. In other words, it lets us check how many times the onClick handler ran, and therefore how many times the button was clicked.
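To make the idea of a call-tracking function concrete, here is a minimal hand-rolled sketch. It is a simplified stand-in and not how Jest implements jest.fn(); `createMockFn` is a hypothetical name used only here. The one detail borrowed from Jest is that recorded calls live under a `mock.calls` array.

```javascript
// Simplified stand-in for a mock function; NOT Jest's actual implementation.
// `createMockFn` is a hypothetical name used only for this sketch.
function createMockFn() {
  const calls = []; // one entry (the argument list) per invocation

  const mockFn = (...args) => {
    calls.push(args); // record the arguments of this call
  };

  // Mirror the shape Jest uses for recorded calls: mockFn.mock.calls
  mockFn.mock = { calls };
  return mockFn;
}

const onClick = createMockFn();
onClick("first");
onClick("second");

console.log(onClick.mock.calls.length); // 2
console.log(onClick.mock.calls[0]); // [ 'first' ]
```

An assertion like toHaveBeenCalledTimes(1) can be thought of as comparing the length of that recorded list with the expected number.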

Now, we will get access to the button and click it using fireEvent.click():

const buttonElement = screen.getByText("Add");

fireEvent.click(buttonElement);

fireEvent.click() simulates a click by dispatching a click event on the button element.
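Conceptually, firing an event means creating an event object and dispatching it on a target so that its listeners run. The sketch below shows that mechanism with the standard EventTarget and Event classes, assuming a runtime that provides them globally (browsers and recent Node.js versions). It uses a bare EventTarget in place of a rendered button and leaves out everything React and Testing Library do on top.

```javascript
// A bare EventTarget stands in for the rendered <button> (assumption for this sketch).
const target = new EventTarget();

let clickCount = 0;
target.addEventListener("click", () => {
  clickCount += 1; // runs each time a "click" event is dispatched on the target
});

// Roughly what "firing" a click amounts to: build an event, dispatch it.
target.dispatchEvent(new Event("click", { bubbles: true }));

console.log(clickCount); // 1
```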

Next, we will write an assertion for how many times the button has been clicked. First, we will write a deliberately incorrect assertion:

expect(onClick).toHaveBeenCalledTimes(0);

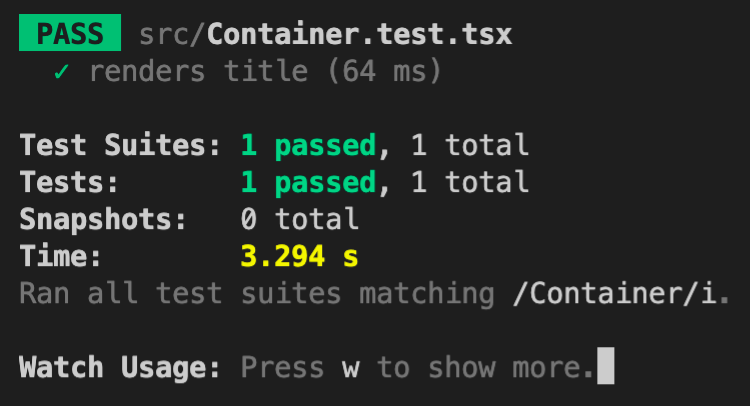

Now, we will run our test:

yarn test

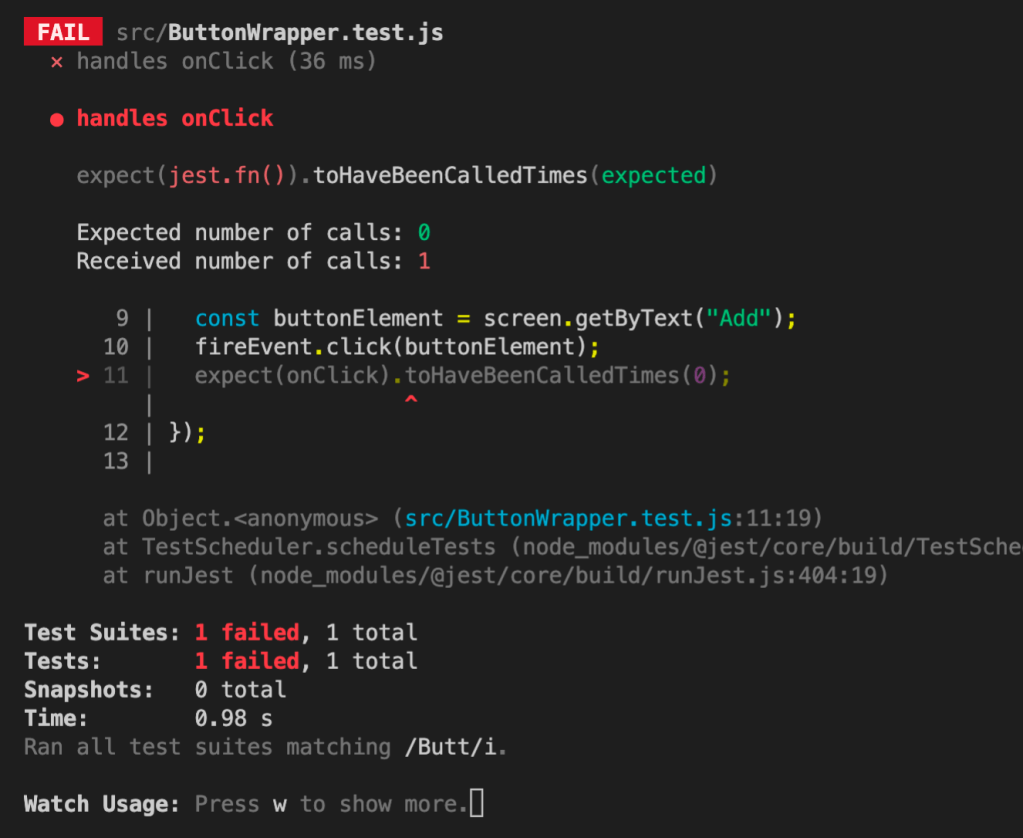

This test will not pass because we know that the button was clicked once by the fireEvent call. The output should look like this:

Basically, React Testing is saying that it expected 0 calls to be made, but it received 1 call.

Now, let’s make a correct assertion:

expect(onClick).toHaveBeenCalledTimes(1);

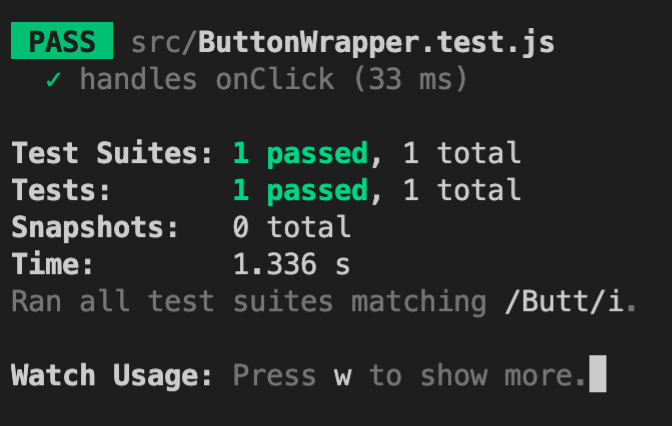

The output should look like this:

Here is the final code:

import React from "react";
import { render, screen, fireEvent } from "@testing-library/react";
import ButtonWrapper from "./ButtonWrapper";

test("handles onClick", () => {
  const onClick = jest.fn();
  render(<ButtonWrapper onClick={onClick} title={"Add"} />);
  const buttonElement = screen.getByText("Add");
  fireEvent.click(buttonElement);
  expect(onClick).toHaveBeenCalledTimes(1);
});

Now you can test event handlers with React Testing Library. Thanks for reading!